May this be a warning to anyone who would blindly trust Microsoft with their data.

Disclaimer: Managing an array of disks joined into a single volume is, to this day, an actual career within IT. It is a serious job with serious requirements and serious responsibilities. It is not wise to think that an end consumer with a few drives of questionable condition can reliably turn them into a functioning array and trust that array with all of their data. There is a lot of stuff that toys like Storage Spaces will allow you to forgo, ignore and not be responsible for. What we’re doing here is prosumer grade. It’s not professional or enterprise grade. Keep this in mind. Read more about general rules for data storage and data backup. I’m not kidding: if you plan to trust any kind of disk array with your family photos, learn how to do this properly.

My original plan was one of the smarter decisions of this story. I would move my data onto two 6TB WD REDs in my gaming setup that worked in RAID1. I decided not to touch these until the NAS was built and I was confident in its ability to hold data.

I purchased a Fractal Design Node 804 case and started building. The original setup was built around an Intel Pentium G5400, which had really good reviews for use with Plex. For the price, it was a gem. Then I went and bought three additional 6TB disks. I put all of this together in the following configuration:

| Component | Part |
|---|---|
| MOBO | Gigabyte B365M D3H |
| CPU | Intel Pentium G5400 |
| RAM | 2x8GB DDR4 non-ECC memory |
| SSD | 1x 256GB Samsung SATA SSD |
| HDD | 4x 3TB WD RED EFRX + 3x 6TB WD RED EFRX |
| ACC | Marvell SATA PCIe expansion card |

One always appreciates the view into Node 804’s HDD bays:

Windows and drivers installed without a problem, the Storage Spaces pool was set up with all HDDs in it, and I began to test it. It went really well. I was able to recover from disks being unplugged and from the PC being turned off at the PSU switch. I tried all kinds of torture and SS behavior seemed predictable. I eventually found out about its performance issues and the requirement for aligned interleave sizes, and all seemed to be well.

The next step seemed logical: move some data onto it. This was tricky, because most of my data was encrypted on the original machine. I decided to trust BitLocker with data encryption. This setup is supported, since Storage Spaces only provides the volume and doesn’t really care about the partitions on it. After a few afternoons of playing around with this configuration, I ran a copy job to move 6TB worth of data from my old PC array to my new NAS array. This took a while, but it eventually finished. Shares were set up, Plex was configured, it all worked and I was happy with my build.

It all went so well, in fact, that I broke my RAID-1 array, inserted one of its 6TB units into the NAS and added it to the pool. Windows formatted the unit, added it to the pool and started optimizing the pool so that the amount of data stored on each drive was roughly equal. The job finished in a few hours and the operation was a success. My little NAS would now hold 7 mechanical disks and 1 SATA SSD.

After a few days, I decided I wanted to move on and begin updating my gaming build, as the NAS was already finished and worked well. I took my one remaining 6TB unit (the only remaining unused drive with a backup of all my data) and placed it into the NAS. Maybe it was years of experience in IT, the countless fuckups or experience with the inevitable doom of anything electronic, but I did not wipe that drive. In fact, I placed it in the front compartment of the Node 804 and did not plug it in. “If everything else fails, this holds a copy of all the data.”

My gaming build was taken apart at this point and I was thinking about how to go about the upgrades. I noticed that the Fractal Design Define R5 I used did not have any fans in the bottom part of its front side, so there had been no airflow over most of the HDDs for the years I had been using them. This made me realize I had not paid much attention to cooling in my new NAS. Given the number of disks I operated at that point, I decided it warranted the purchase of HDTune Pro, which I used to monitor SMART values and temperatures. They held at around 49 degrees Celsius. Not great, not terrible.

Days went past and my gaming setup was still in pieces. I had a lot of work and it was convenient for me to come home, watch movies on Plex and fall asleep. Eventually, during one of my routine checkups, I noticed HDTune was reporting a lot of CRC errors in SMART for one particular disk.

CRC Error Check in SMART

This reading was the first toll of the death bell, but I didn’t know it yet. I realized I had no way to identify individual disks in the case. I knew which drive was having issues based on the serial number and the information on the screen, but I didn’t know which physical disk that was or which SATA port it occupied. Not to worry: I powered down the NAS, unplugged all the drives and took notes about the location of each of them. Thinking back, I can hardly believe I was so reckless. Not only was this machine not backed up, but I was completely oblivious to the risks this procedure entailed. Unplugging and replugging disks comes with the risk of a wire being punctured, bent or otherwise damaged, or of a connection not being made properly. At that time, I was protected against a single-disk failure, and a single disk was already having issues communicating. This was not good, but I didn’t realize it until it was too late. So I figured out which drive was having problems, reseated its connections and turned the computer back on. Windows booted up, Storage Spaces mounted, BitLocker unlocked. I fired up HDTune and started monitoring the CRC error value. It kept going up. “Hmm.” Maybe I’d need to change the cable. The cable. At the time, I was using AliExpress-sourced multicables that looked nice and saved a lot of space in the case.

Not to worry, I had bought more of them (everything on Ali is so cheap, right?), so I was able to replace the faulty one. I powered down, unplugged the whole rail of disks and replaced the cable. I made sure everything was plugged back in correctly and fired up the NAS. I let it warm up for a while and went back to the HDTune SMART view. The affected disk was not reporting additional CRC errors and all seemed fine. “Problem solved.” I went about my day and came back eventually because I realized: if that cable was faulty this soon after being bought, might the new cable also be faulty? I started HDTune and began checking individual drives. Three different drives were reporting CRC errors now, including the one I knew about. “Mkay.” The one I knew about was fine; its number was the same. But the two others were concerning. I had no way of knowing whether their errors had been caused by the previous cable or were coming from the new one. After a while of refreshing, I realized these two drives kept reporting CRC errors and the numbers were going up. “I wonder how the pool is doing with this?” I fired up the Storage Spaces console. The pool was not green or yellow. It was red.

“Fuck.”

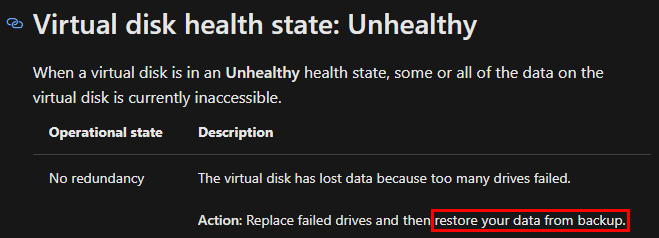

What now? This was going to be a data recovery night, or so I thought. I found some data recovery tools that were platform independent (“just read everything on the disk”) and some that were supposed to help with Storage Spaces. Through PowerShell, I found that the status of the virtual disk was “Unhealthy.” That doesn’t seem so bad. Maybe it’s a little worse than degraded, since the data isn’t accessible, but maybe there’s a way to mount it in its last working configuration? Here is what the official documentation has to say about “Unhealthy”:

“Fuck.”

Storage Spaces cmdlets in PowerShell really won’t let you do anything at this point. The entire product does not come with any recovery tools. Once you’re done, you’re done. 3rd party recovery route it was.

Anyway, tools have emerged since Storage Spaces was introduced and I tried using those, but there was one thing nobody really expected to see on a Storage Spaces volume: BitLocker. Encryption threw every single tool off, because nothing was readable on the volume I was trying to recover. I was getting desperate. The next day I realized that even if I could at least partially recover the array, I had nowhere to copy the data. All my disks were in that NAS. What now?

That’s when I went out and bought fifteen 2TB units that someone was getting rid of after they had served in a data center for 5 years. Interestingly, during those 5 years, these units had logged only 3 spin-ups in their SMART. Anyway, they were letting them go for $20 apiece, so I took them all. “At least I will have spares.” My gaming PC was quickly repurposed as a secondary data warehouse.

I was trying to avoid being dumb, so during this rebuild I made sure not to use the AliExpress SATA cables, to avoid having the same issue. This setup used slim SATA cables from AKASA. They are pricey, but I thought they were good quality and that some engineering had to have gone into them to make them this bendable and small.

Windows was reinstalled, Storage Spaces was set up, everything was ready to receive recovered data.

Eventually I came to find that there was no way to recover the crippled array. No tool was able to punch through a broken parity array that had BitLocker on it. It was simply too complex a failure. At that point I realized my setup was not in any way common, so advice on how to recover would be scarce, and most of what existed on the internet was of no use to me. Complete failure.

Carefully, I connected my last remaining copy of the data, on a single 6TB unit, and started copying all of it to my new data warehouse operation in the gaming PC. I figured that since this single disk now held my lifetime’s worth of digital content, it had been promoted from backup back to production and hence needed to be backed up. This went fine, but this copy of my data was several weeks old. I had lost weeks’ worth of data. Work, family photos, personal projects. Everything from my recent history was unrecoverably lost, along with several TB of media content I had downloaded in that period.

I felt like a dumbass. I was a dumbass. How can a seasoned software developer, someone who works in IT, ever lose any data at all? This only happens to BFUs, right?

Anyway, it was now a question of what to do next. I threw all of the AliExpress cabling away and rebuilt the array using the AKASA cables. At the time, I thought spending extra on these made sense. Not only that, I realized my NAS needed a backup and had nowhere to really back up to. Before the array went unhealthy, I had over 10TB of data there that I would need to back up somewhere else. At first, I thought about backing up to my repurposed gaming machine. This was quickly dismissed, because I kept turning it off in the evenings and the backup wouldn’t go through. If multiple machines tried to back up, it would saturate the NIC and games would lag. Not optimal. I realized that I was doing things wrong: a gaming PC is not a NAS. A NAS is not a backup server. I was combining use cases that should not be combined. As a quick & dirty solution, I created a separate Storage Spaces pool with four 3TB units, set them up in a stripe configuration and used it as a backup of the main pool with four 6TB disks. Mirroring two pools is fast, and it provided a reasonable temporary backup solution, even if the secondary pool was over 90% full and it was clear I had maybe a few weeks before it ran out of space to hold all of the data. It was at this point I realized I needed another NAS. A unit that only holds backups and does nothing else. If anything breaks on my primary NAS or any other computer in the household, I want to be sure that no data is at risk, because even if I throw that entire PC or device into the trash, its data will have been backed up to a different machine that has its own redundancy and runs independently. Before, I couldn’t justify this investment, but after many sleepless nights, I realized there is no price too high to avoid going through this again.
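The "over 90% full" figure for the four-drive stripe is easy to sanity-check with a few lines of Python. This is only a rough sketch: it assumes the "over 10TB" number was read off Windows, which reports binary units (TiB) under the "TB" label, and it ignores any space Storage Spaces keeps for its own metadata. The function names are made up for illustration.

```python
# Decimal terabytes (as printed on drive labels) vs binary tebibytes
# (what Windows shows, labelled "TB").
TB = 10**12
TIB = 2**40


def stripe_capacity_tib(drive_sizes_tb):
    """Raw capacity of a simple (striped, no redundancy) space, in TiB."""
    return sum(drive_sizes_tb) * TB / TIB


def fill_ratio(used_tib, capacity_tib):
    """Fraction of the pool that is occupied."""
    return used_tib / capacity_tib


backup_pool_tib = stripe_capacity_tib([3, 3, 3, 3])  # four 3TB WD REDs
used_tib = 10.0  # assumption: "over 10TB" as reported by Windows

print(f"capacity: {backup_pool_tib:.2f} TiB")
print(f"fill:     {fill_ratio(used_tib, backup_pool_tib):.0%}")
```

Under those assumptions the stripe offers about 10.9 TiB, so 10 TiB of data already puts it at roughly 92% with no redundancy at all, which is why it could only ever be a stopgap.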

And so the new primary NAS was born. I decided not to sell the parts left over after I upgraded my gaming PC to a Ryzen 3700X and RTX 2070S and instead used them for the new primary NAS. A new primary NAS had to have additional HDDs, right? I got a deal on Seagate 10TB CMR drives. I ended up buying nine Seagate Barracuda Pro 10TB, 3.5”, SATA III, 7200rpm, ST10000DM0004. I think the price at the time was $200 per unit, and they had been used for several months in a Synology enclosure to record security footage. Great! To reiterate, this is the state of available storage devices now:

| Model | Capacity | Count |
|---|---|---|
| HITACHI ENTERPRISE | 2TB | 15x |
| WD RED/EFRX | 3TB | 4x |
| WD RED/EFRX | 6TB | 5x |
| SEAGATE BARRACUDA PRO | 10TB | 9x |

It looked a little weird in my home office corner for a while:

Building and data management were in full swing. Eventually, three different PCs were built in a short span. Here are the builds:

NAS #1 (PRIMARY NAS)

| Component | Part |
|---|---|
| MOBO | ASRock B250M Pro4 |
| CPU | Intel Core i5 6600K |
| RAM | 2x8GB DDR4 non-ECC |
| GPU | ASUS GTX 1060 6G |
| SSD | Samsung 970 EVO Plus M.2 256GB |
| HDD | 9x10TB Seagate Barracuda Pro |
| ACC | Marvell SATA PCIe Expansion Card |

NAS #2 (BACKUP NAS)

| Component | Part |
|---|---|
| MOBO | GIGABYTE B365M D3H |
| CPU | Intel Pentium G5400 |
| RAM | 2x8GB DDR4 non-ECC memory |
| GPU | Palit GTX 960 4G |
| SSD | Samsung SATA SSD 256GB |
| HDD | 4x3TB WD RED EFRX + 3x6TB WD RED EFRX |
| ACC | Marvell SATA PCIe Expansion Card |

The primary NAS exists to hoard data and run Plex. The secondary NAS wakes up periodically and mirrors data from the primary NAS through shares and ACLs. The storage pool on the gaming PC was left in place, just so I can see whether it will still work years later. It’s mostly used to store memes for Discord conversations. So far, no memes have been lost.

But the nightmare wasn’t over yet. Shortly after all of this was deployed around my apartment, I started getting intermittent issues with both NAS units and even my gaming setup. Those pesky CRC errors started popping up again! Well, it turns out that those AKASA slim SATA cables are really bad. They fail all the time, without warning, and are so badly manufactured that even touching one in a working computer while performing maintenance might cause it to fail. I have been replacing them as they fail, but no data has been lost since, thanks to the backup scheme I have orchestrated. For total peace of mind, I’m also running an offsite backup to fully stick to the 3-2-1 backup standard. Life is good.
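For anyone unfamiliar with it, the 3-2-1 rule means at least three copies of the data, on at least two different media or devices, with at least one copy offsite. A minimal Python sketch of that check follows; the `Copy` class, the function name and the labels in the example are all made up for illustration and only loosely mirror the setup described here (the post does not say what the offsite target is).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    name: str      # label for the copy (hypothetical)
    medium: str    # device or media type holding it
    offsite: bool  # True if stored away from home


def satisfies_3_2_1(copies):
    """True for >= 3 copies, on >= 2 distinct media, with >= 1 offsite."""
    media = {c.medium for c in copies}
    has_offsite = any(c.offsite for c in copies)
    return len(copies) >= 3 and len(media) >= 2 and has_offsite


# Hypothetical inventory: two NAS pools at home plus one offsite copy.
setup = [
    Copy("primary NAS", "primary-pool", offsite=False),
    Copy("backup NAS", "backup-pool", offsite=False),
    Copy("offsite backup", "offsite-storage", offsite=True),
]

print(satisfies_3_2_1(setup))      # all three conditions met
print(satisfies_3_2_1(setup[:2]))  # two copies at home only
```

The second call is the situation from earlier in the story: two copies in the same apartment is better than one, but it fails both the count and the offsite condition.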

Lessons learned

These lessons come from the me of 2 years ago, so I’m working from memory. But to summarize, this is what I learned back then:

Nobody cares about BitLocker. Seriously, a NAS shouldn’t use it. In fact, optimally, a NAS shouldn’t be running on Windows anyway. There are much better options to protect my data, which I have gladly adopted. Without sharing too much about my scheme, I would suggest that everyone give VeraCrypt a try. There’s usually no need to encrypt all of your movies, so the amount of stuff that deserves protection is usually limited. If you have so much stuff that needs protection, then BitLocker is not a solution. You should look into Linux and figure out what it is that you really require. Using BitLocker complicates things on a server. No how-to or troubleshooting guide expects it to be present on the affected machine. No third-party data management or recovery tool I found had any support for it, and data recovery was impossible, since the data is scrambled by design. BitLocker is an end-user encryption tool that should be deployed on a laptop, where it protects your or your company’s data if the machine ever gets stolen or lost. That’s it.

Do not mix incompatible purposes. Gaming/NAS is nonsense. My current gaming setup is performing great and I’m super happy with it. It also does nothing else besides gaming, so I’m not worried about security. All those games come as .exe files running under administrative privileges, doing whatever they can. Additionally, games regularly install and use anti-cheat tools that also run under an administrative or even the system account, collecting data about whatever they want. Having a dedicated machine to hold all this cancer, without having to worry about my storage pool, feels nice. The secondary NAS only serves as a dump that runs once every two days and creates incremental backups of everything on the primary NAS. I haven’t had to touch that unit in its entire lifetime, until the recent maintenance mentioned above.

Cables. Use good ones. As you may have noticed, I encountered two completely different and unrelated models of SATA cables that die unexpectedly and regularly arrive DOA. After the massive fail with the AliExpress multi SATA cables, I realized how stupid it was to use them in the first place. I spared no expense on buying large quantities of the AKASA cables, thinking more price means more better. Wrong again. Those cables should be illegal, that’s how shit they are. I learned the hard way that people use regular, fat-sleeved SATA cables for a reason.
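What gave the bad cables away every time was the CRC counter (SMART attribute 199, UDMA CRC Error Count). That counter is normally cumulative, so an old non-zero value is not alarming by itself; what matters is whether it grows between two readings. A minimal Python sketch of that comparison is below. The function name, serial numbers and values are hypothetical, and how the readings get collected (HDTune, smartctl, anything else) is left out.

```python
def rising_crc_counters(previous, current):
    """Return {serial: increase} for drives whose CRC error count grew.

    Both arguments map a drive serial number to the raw value of SMART
    attribute 199 (UDMA CRC Error Count) at the time of the reading.
    """
    rising = {}
    for serial, count in current.items():
        before = previous.get(serial, count)  # unseen drive: no baseline yet
        if count > before:
            rising[serial] = count - before
    return rising


# Hypothetical readings, not the actual values from this story.
earlier = {"WD-AAA": 12, "WD-BBB": 0, "WD-CCC": 0}
later = {"WD-AAA": 12, "WD-BBB": 7, "WD-CCC": 31}

for serial, delta in rising_crc_counters(earlier, later).items():
    print(f"{serial}: +{delta} CRC errors since last check, suspect the cable")
```

In this made-up example the first drive has old errors but a stable count, like the disk whose cable had already been swapped, while the other two are still climbing and are the ones to worry about.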

Storage Spaces is not consumer ready. Running three Storage Spaces instances in those two years convinced me that it is a capable tool. All of those instances, however, still require maintenance that has to be run from PowerShell. I can’t imagine ever recommending to an average Joe that they can safely use Storage Spaces without worrying about their data. The parity implementation, when used through the UI, is absolutely terrible, and suggesting drive pools to any user is questionable at best. There is nothing like “accidentally deleting a folder” with arrays. If you fuck up, it’s all gone irreversibly. Whenever I think maybe I could deploy this somewhere, I remember my parents in their fifties, collecting a surprising amount of pictures from their travels. They actually go through these with their peers, and I can’t imagine them suffering a data loss that would take all of this away from them. See, at 30, if you lose everything, you have a lot of time to rebuild it. At 55, after beating cancer and with a questionable prognosis, you simply can’t afford to lose your digital wealth, since you won’t have time to relive many of those moments or opportunities. I have automated backups set up for them that are often more paranoid than mine. At no point would I ever risk deploying Storage Spaces there. SS will not even send you an email when stuff breaks. It’s simply not suited for regular users.
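Since nothing sends that email for you, the alerting has to be bolted on. The following is a rough Python sketch of the message-building half only, using the standard library’s `email.message.EmailMessage`. The input format (a list of dicts with `name` and `health` keys), the function name and the addresses are assumptions made for illustration; collecting the statuses from Storage Spaces and actually sending the mail are deliberately left out.

```python
from email.message import EmailMessage


def build_alert(disks, sender, recipient):
    """Return an EmailMessage listing non-healthy disks, or None if all are fine."""
    broken = [d for d in disks if d["health"] != "Healthy"]
    if not broken:
        return None
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = f"Storage alert: {len(broken)} disk(s) need attention"
    msg.set_content("\n".join(f"{d['name']}: {d['health']}" for d in broken))
    return msg


# Hypothetical status snapshot; names and addresses are placeholders.
snapshot = [
    {"name": "NAS-Pool", "health": "Unhealthy"},
    {"name": "Backup-Pool", "health": "Healthy"},
]

alert = build_alert(snapshot, "nas@example.com", "me@example.com")
if alert is not None:
    print(alert["Subject"])
    print(alert.get_content())
```

A scheduled task could run something like this every few minutes and hand the resulting message to `smtplib`; the point is only that a red pool should reach you before you happen to open the console.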

Hi, great article. I thought a software NAS solution was great because you can just install the software on a new PC and go from there if your hardware gets spelunked. With an old NAS appliance, the unit may no longer be manufactured and spare parts may be hard to find.

But Storage Spaces, SoftRAID and DrivePool left me disappointed. I ended up deciding on SS, but I will keep an eye on it.